Training Data

No training data is available from the CUBDL organizers for the reasons stated here. However, plane wave data that can be used for network training is available on the PICMUS website.

Updated July 5, 2021: A subset of the contributed data that was not selected by the CUBDL organizers for evaluation of Task 1 is included in our open dataset for potential future training of new networks. These datasets include both focused and plane wave data and are available by requesting access on the CUBDL DataPort site.

Beamforming Help

The Ultrasound Toolbox can be used to help with beamforming training data.

Code Structure

Added on April 24, 2020: We are providing example code here that shows how the data will be organized. Plane wave and focused transmit raw data will be provided to the user as Python classes. We will also provide a pixel grid that contains the coordinates for each reconstructed pixel.
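To make this organization concrete, here is a minimal sketch of what a plane-wave data object and a pixel grid might look like. The class `PlaneWaveData`, its field names, and `make_pixel_grid` are hypothetical stand-ins loosely modeled on the description above. They are not the official CUBDL classes, which may use different names, shapes, and units.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class PlaneWaveData:
    """Hypothetical container for plane-wave IQ channel data.

    Field names are illustrative assumptions, not the official CUBDL API.
    """
    idata: np.ndarray      # in-phase samples, shape (n_angles, n_elements, n_samples)
    qdata: np.ndarray      # quadrature samples, same shape as idata
    angles: np.ndarray     # transmit steering angles [rad], shape (n_angles,)
    ele_pos: np.ndarray    # element (x, y, z) positions [m], shape (n_elements, 3)
    fc: float              # transducer center frequency [Hz]
    fs: float              # sampling frequency [Hz]
    c: float               # assumed speed of sound [m/s]
    time_zero: np.ndarray  # per-angle time of the first sample [s], shape (n_angles,)

    def __post_init__(self):
        # Catch shape mismatches early, before they reach a beamformer or network.
        if self.idata.shape != self.qdata.shape:
            raise ValueError("idata and qdata must have the same shape")
        n_angles, n_elements, _ = self.idata.shape
        if len(self.angles) != n_angles or len(self.time_zero) != n_angles:
            raise ValueError("angles and time_zero must have length n_angles")
        if self.ele_pos.shape != (n_elements, 3):
            raise ValueError("ele_pos must have shape (n_elements, 3)")


def make_pixel_grid(xlims, zlims, dx, dz):
    """Return an (nz, nx, 3) array of (x, y, z) pixel coordinates in meters, with y = 0."""
    x = np.arange(xlims[0], xlims[1] + dx / 2, dx)  # +dx/2 keeps the end point
    z = np.arange(zlims[0], zlims[1] + dz / 2, dz)
    zz, xx = np.meshgrid(z, x, indexing="ij")       # both have shape (nz, nx)
    return np.stack([xx, np.zeros_like(xx), zz], axis=-1)
```

A beamformer (conventional or learned) would then produce one output value per `grid[iz, ix]` location.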

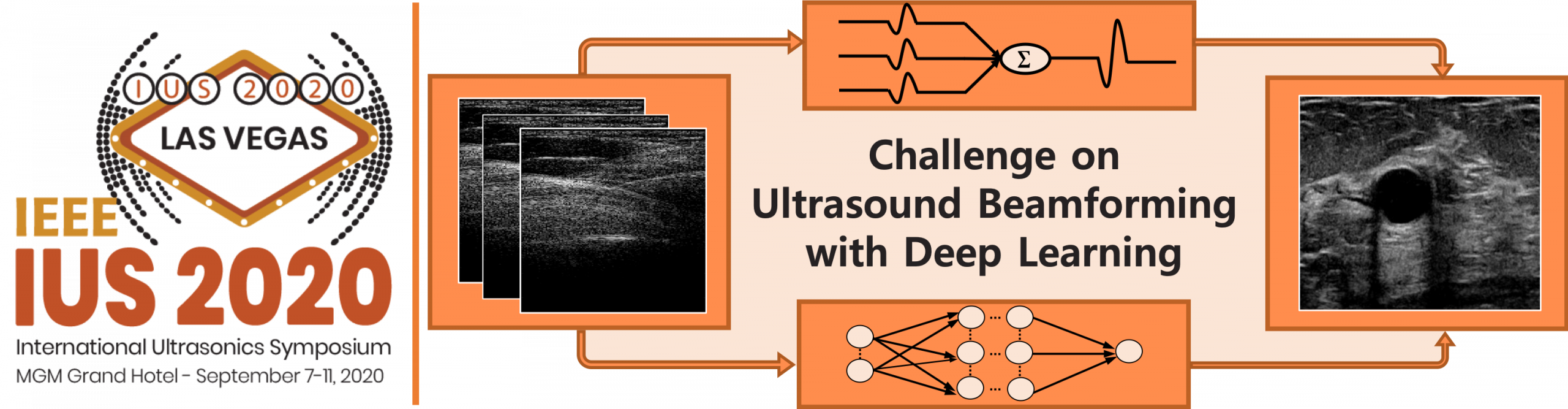

Contestants should implement models that take the appropriate data object and grid as input and produce the final beamformed image as output, e.g., perform delay-and-sum (DAS) beamforming. As starter code, we provide a PyTorch implementation of DAS that converts the raw data from the PICMUS challenge into beamformed images.
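For readers who want to see the DAS geometry without a deep learning framework, here is a minimal NumPy sketch of plane-wave DAS. It is not the organizers' PyTorch starter code. It assumes real-valued RF channel data (IQ data would additionally need a phase-rotation term), a linear array with elements on the z = 0 line, and a time origin at which each plane wave crosses x = z = 0. The function name `das_plane_wave` and its arguments are hypothetical.

```python
import numpy as np


def das_plane_wave(rf, angles, ele_x, fs, c, grid_x, grid_z, t0=0.0):
    """Delay-and-sum beamforming of plane-wave RF channel data (hypothetical sketch).

    rf      : (n_angles, n_elements, n_samples) real RF channel data
    angles  : (n_angles,) plane-wave steering angles [rad]
    ele_x   : (n_elements,) lateral element positions [m]; elements lie at z = 0
    fs, c   : sampling frequency [Hz] and speed of sound [m/s]
    grid_x, grid_z : 1-D lateral and axial pixel coordinates [m]
    t0      : time of the first sample [s], relative to the wavefront crossing the origin
    Returns an (nz, nx) image, coherently compounded over all angles.
    """
    rf = np.asarray(rf, dtype=float)
    n_angles, n_elements, n_samples = rf.shape
    xx, zz = np.meshgrid(grid_x, grid_z)          # (nz, nx) pixel coordinates
    t = t0 + np.arange(n_samples) / fs            # sample times [s]
    img = np.zeros(xx.shape)
    for a in range(n_angles):
        # Transmit path: distance travelled by the steered plane wave to each pixel.
        d_tx = xx * np.sin(angles[a]) + zz * np.cos(angles[a])
        for e in range(n_elements):
            # Receive path: from each pixel back to element e.
            d_rx = np.hypot(xx - ele_x[e], zz)
            tau = (d_tx + d_rx) / c               # round-trip delay for every pixel
            # Linearly interpolate the channel signal at tau; zero outside the record.
            img += np.interp(tau, t, rf[a, e], left=0.0, right=0.0)
    return img
```

The explicit loops keep the delay calculation readable; a practical implementation would typically vectorize them or move them to the GPU, and would usually apply apodization across the receive aperture.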

Contestants are free to incorporate deep learning at any point in the image reconstruction pipeline, whether it be before, during, or after DAS. The only requirement is that the final output of the model be image data (or IQ data that will be converted into image data, as in the example).
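Converting beamformed IQ data into image data usually means envelope detection followed by log compression. The sketch below assumes `idata`/`qdata` arrays of beamformed in-phase and quadrature values (hypothetical names) and an arbitrary 60 dB display range; the conversion used in the provided example may differ in its normalization or dynamic range.

```python
import numpy as np


def iq_to_bmode_db(idata, qdata, dynamic_range_db=60.0):
    """Envelope-detect beamformed IQ data and log-compress it to a dB image in [-DR, 0]."""
    env = np.abs(np.asarray(idata) + 1j * np.asarray(qdata))  # envelope = |I + jQ|
    env = env / env.max()                                     # normalize peak to 0 dB
    db = 20 * np.log10(np.maximum(env, 1e-12))                # guard against log10(0)
    return np.clip(db, -dynamic_range_db, 0.0)
```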

We also provide functions for some of the image metrics, although it is up to the contestant to select the proper regions of interest (ROIs) in the image to be used with the metrics. Note that during model evaluation, we will specify the ROIs via the “grid” input.
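For orientation, the following sketch implements three widely used metric definitions (contrast in dB, contrast-to-noise ratio, and generalized CNR) together with a circular ROI mask built from the pixel grid axes. These are common textbook forms applied to envelope (amplitude) values; the organizers' provided functions may differ in details such as amplitude versus intensity or histogram binning, and all function names here are hypothetical.

```python
import numpy as np


def circle_mask(x, z, cx, cz, r):
    """Boolean (nz, nx) mask of pixels within radius r of (cx, cz); x, z are 1-D axes [m]."""
    xx, zz = np.meshgrid(x, z)  # (nz, nx), matching the image layout
    return (xx - cx) ** 2 + (zz - cz) ** 2 <= r ** 2


def contrast_db(inside, outside):
    """Contrast of two envelope ROIs in dB: 20*log10(mean_in / mean_out)."""
    return 20 * np.log10(np.mean(inside) / np.mean(outside))


def cnr(inside, outside):
    """Contrast-to-noise ratio: |mu_in - mu_out| / sqrt(var_in + var_out)."""
    return abs(np.mean(inside) - np.mean(outside)) / np.sqrt(np.var(inside) + np.var(outside))


def gcnr(inside, outside, bins=256):
    """Generalized CNR: 1 minus the overlap of the two normalized histograms."""
    inside, outside = np.ravel(inside), np.ravel(outside)
    # Shared bin edges so that the two histograms are directly comparable.
    edges = np.histogram_bin_edges(np.concatenate([inside, outside]), bins=bins)
    h_in, _ = np.histogram(inside, bins=edges)
    h_out, _ = np.histogram(outside, bins=edges)
    h_in = h_in / h_in.sum()
    h_out = h_out / h_out.sum()
    return 1.0 - np.minimum(h_in, h_out).sum()
```

For example, with an envelope image `env` on axes `gx`, `gz`, a lesion ROI could be `env[circle_mask(gx, gz, 0.0, 20e-3, 2e-3)]` and a background ROI an annulus such as `circle_mask(gx, gz, 0.0, 20e-3, 4e-3) & ~circle_mask(gx, gz, 0.0, 20e-3, 3e-3)`. The center and radii here are purely illustrative.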

Test Data

Before getting started, we strongly encourage participants to review the CUBDL Data Guide, which provides detailed information about the test data that will be used for participant evaluation. Trained networks should be able to generalize across the range of parameters provided in the CUBDL Data Guide. For example, the acquisition center frequencies range from 2.5 MHz to 12.5 MHz, and the sampling frequencies range from 10 MHz to 78.125 MHz. Networks should be able to beamform images within this range of frequencies. The ultrasound transducers consist of linear and phased arrays, so networks should also be able to generalize across these transducer types. See the CUBDL Data Guide for more details on expected parameter ranges.
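As a quick sanity check on one's own training data, a sketch like the following flags acquisitions outside the frequency ranges quoted above and reports the acoustic wavelength. The constant names and helper functions are hypothetical, and c = 1540 m/s is an assumed soft-tissue speed of sound; the CUBDL Data Guide remains the authoritative source for the ranges.

```python
# Ranges quoted in the text above; consult the CUBDL Data Guide for authoritative values.
FC_RANGE_HZ = (2.5e6, 12.5e6)    # acquisition center frequency
FS_RANGE_HZ = (10e6, 78.125e6)   # sampling frequency


def within_guide_ranges(fc, fs):
    """True if the center frequency fc and sampling frequency fs [Hz] lie in the quoted ranges."""
    return FC_RANGE_HZ[0] <= fc <= FC_RANGE_HZ[1] and FS_RANGE_HZ[0] <= fs <= FS_RANGE_HZ[1]


def wavelength(fc, c=1540.0):
    """Acoustic wavelength [m] for center frequency fc [Hz] and speed of sound c [m/s]."""
    return c / fc
```

At c = 1540 m/s the wavelength spans roughly 0.12 mm (12.5 MHz) to 0.62 mm (2.5 MHz). A fixed pixel spacing therefore covers very different fractions of a wavelength across the test set, which is one reason generalization across this range is nontrivial.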

Test data will consist of the following datasets (more details in the CUBDL Data Guide):

- 49 experimental phantom data sequences acquired with plane wave transmissions

- 25 in vivo data sequences from the heart of thirteen patients, the carotid of two healthy volunteers and the brachioradialis of a healthy volunteer, each acquired with plane or diverging wave transmissions

- 6 experimental phantom datasets acquired with focused transmissions

- 24 in vivo data sequences from the breast of ten patients, the carotid of two healthy volunteers, and the heart of a healthy volunteer, each acquired with focused transmissions

- 2 Field II simulations

Release of Test Data

Test data will not be released to participants while the challenge is active; instead, we share the CUBDL Data Guide to assist with network training. Upon completion of the challenge, we intend to release all unrestricted test data in order to provide a useful reference and benchmark dataset for future follow-on work. More details on our future plans are available here.

Updated December 13, 2020: Datasets are now available for release by requesting access on the CUBDL DataPort site.

Updated July 5, 2021: Our journal paper describing the totality of released CUBDL-related datasets was accepted for publication! D. Hyun, A. Wiacek, S. Goudarzi, S. Rothlübbers, A. Asif, K. Eickel, Y. C. Eldar, J. Huang, M. Mischi, H. Rivaz, D. Sinden, R.J.G. van Sloun, H. Strohm, M. A. L. Bell, Deep Learning for Ultrasound Image Formation: CUBDL Evaluation Framework & Open Datasets, IEEE Transactions on Ultrasonics, Ferroelectrics, and Frequency Control (accepted July 1, 2021) [pdf]